Latest

May 08

Oxen's Model Report - May 8th, 2026

Welcome back to another iteration of everybody's favorite moooodel report. Every time I sit down to write one

Apr 16

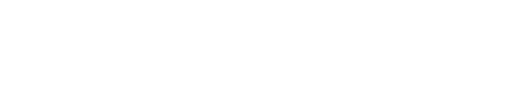

Writing a fine-tuning and deployment pipeline isn't as easy as it looks (Gemma 4 Version)

🚨 You're about to embark on a journey of ups and downs and many aha moments. We're

Apr 09

Oxen's Model Report - April 9th, 2026

Welcome back to another iteration of everybody’s favorite moooodel report. In AI, any given day feels like a decade,

Apr 01

When to Fine-Tune an Image Model

💡 Curious about fine-tuning multi-modal models? This Friday, we're diving into the new Qwen3.5 series, what

Mar 11

Oxen's Model Report - March 11th, 2026

Welcome back to another iteration of our favorite moooodel report. This week we've got an absolutely packed lineup,

Feb 20

Frank's Red Hot

An AI-generated goat rapping alongside Ludacris in Frank's RedHot's "

Feb 20

Isometric.nyc

A giant isometric pixel-art map of New York City, inspired by SimCity 2000 and RollerCoaster Tycoon. Andy Coenen fine-

Feb 19

Bell Canada

A fully AI-generated commercial for the Canadian telecom giant Bell. Made in collaboration with

Feb 17

Oxen's Model Report

Welcome to this week's Oxen moooodel report. We know the AI space moves like crazy. There's

Feb 11

How a $1 Qwen3-VL Fine-Tune Beat Gemini 3

Can a $1 fine-tune beat a state-of-the-art closed-source model?

Model | Accuracy | Time (98 samples) | Cost/Run

Base Qwen3-